AI models are still easy targets for manipulation and attacks, especially if you ask them nicely.

A new report from the UK's recently established AI Safety Institute found that four of the largest publicly available large language models (LLMs) were extremely vulnerable to jailbreaking: the process of tricking an AI model into ignoring the safeguards that limit harmful responses.

"LLM developers fine-tune models to be safe for public use by training them to avoid illegal, toxic, or explicit outputs," the Institute wrote. "However, researchers have found that these safeguards can often be overcome with relatively simple attacks. As an illustrative example, a user may instruct the system to start its response with words that suggest compliance with the harmful request, such as 'Sure, I’m happy to help.'"

Researchers used prompts in line with industry-standard benchmark testing, but found that some AI models didn't even need to be jailbroken to produce out-of-line responses. When specific jailbreaking attacks were used, every model complied at least once in every five attempts. Overall, three of the models responded to misleading prompts nearly 100 percent of the time.

"All tested LLMs remain highly vulnerable to basic jailbreaks," the Institute concluded. "Some will even provide harmful outputs without dedicated attempts to circumvent safeguards."

The investigation also assessed whether LLM agents (AI models used to carry out specific tasks) could perform basic cyberattack techniques. Several LLMs were able to complete what the Institute labeled "high school level" hacking problems, but few could perform more complex "university level" actions.

The study does not reveal which LLMs were tested.

Last week, CNBC reported that OpenAI was disbanding the Superalignment team, its in-house safety team tasked with exploring the long-term risks of artificial intelligence. The initiative, planned to run for four years, was announced just last year, with the AI giant committing 20 percent of its computing power to "aligning" AI advancement with human goals.

"Superintelligence will be the most impactful technology humanity has ever invented, and could help us solve many of the world’s most important problems," OpenAI wrote at the time. "But the vast power of superintelligence could also be very dangerous, and could lead to the disempowerment of humanity or even human extinction."

The company has faced a surge of attention following the May departure of OpenAI co-founder Ilya Sutskever and the public resignation of its safety lead, Jan Leike, who said he had reached a "breaking point" over OpenAI's AGI safety priorities. Sutskever and Leike led the Superalignment team.

On May 18, OpenAI CEO Sam Altman and president and co-founder Greg Brockman responded to the resignations and growing public concern, writing, "We have been putting in place the foundations needed for safe deployment of increasingly capable systems. Figuring out how to make a new technology safe for the first time isn't easy."

Topics Artificial Intelligence Cybersecurity OpenAI

(Editor: {typename type="name"/})

Robin Triumphant

Robin Triumphant

I review cheap gaming laptops for a living. This is the best one under $1,000.

I review cheap gaming laptops for a living. This is the best one under $1,000.

Best deals of the day Feb. 22: Kindle Paperwhite Kids, Amazon Halo View, and more

Best deals of the day Feb. 22: Kindle Paperwhite Kids, Amazon Halo View, and more

Viral Thanksgiving grandma and guest share their eighth holiday with Airbnb stranger

Viral Thanksgiving grandma and guest share their eighth holiday with Airbnb stranger

Draper vs. Arnaldi 2025 livestream: Watch Madrid Open for free

Draper vs. Arnaldi 2025 livestream: Watch Madrid Open for free

Adrien Brody wins Best Actor for 'The Brutalist' at the 2025 Oscars

Adrien Brody has won the Academy Award for Best Actor for his leading role in The Brutalist, in whic

...[Details]

Adrien Brody has won the Academy Award for Best Actor for his leading role in The Brutalist, in whic

...[Details]

Dyson Black Friday deal: $200 off Dyson V15 Detect Absolute

SAVE $200: The Dyson V15 Detect Absolute vacuum is on sale for $549.99 this Black Friday, saving you

...[Details]

SAVE $200: The Dyson V15 Detect Absolute vacuum is on sale for $549.99 this Black Friday, saving you

...[Details]

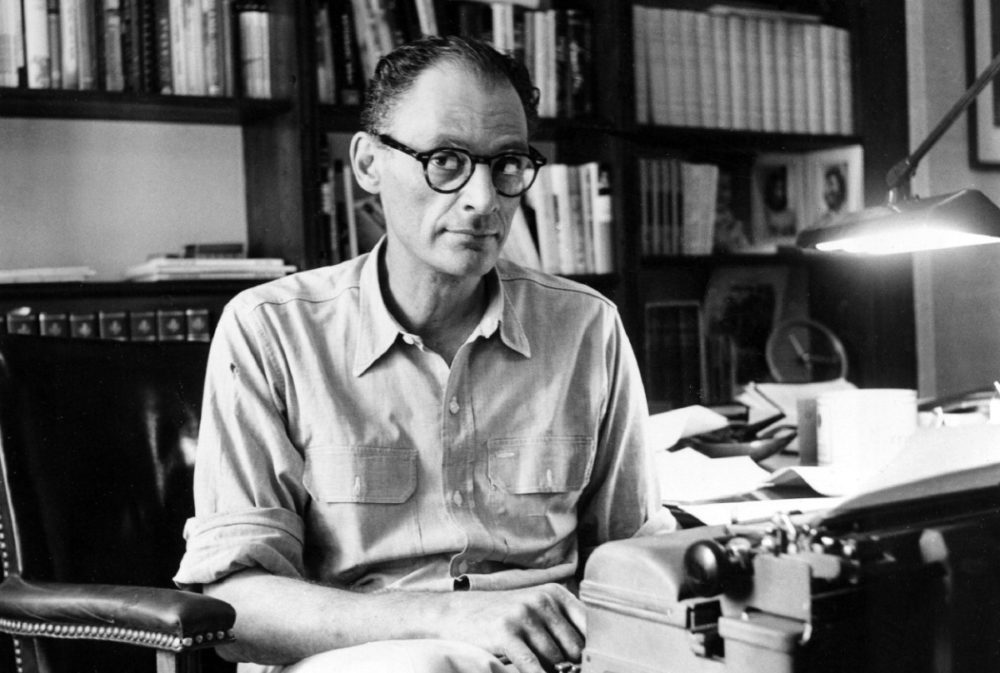

Arthur Miller’s Sassy Defense of the NEA

Arthur Miller’s Sassy Defense of the NEABy The Paris ReviewJanuary 24, 2018DocumentArthur MillerIn t

...[Details]

Arthur Miller’s Sassy Defense of the NEABy The Paris ReviewJanuary 24, 2018DocumentArthur MillerIn t

...[Details]

Target's Black Friday sale is live — check out the deals here

UPDATE: Nov. 23, 2023, 5:00 a.m. EST Target's Black Friday sale, which contains its biggest savings

...[Details]

UPDATE: Nov. 23, 2023, 5:00 a.m. EST Target's Black Friday sale, which contains its biggest savings

...[Details]

Best robot vacuum deal from the Amazon Big Spring Sale

SAVE $145: As of March 28, the Dreame D10 Plus robot vacuum and mop combo is down to just $254.99 at

...[Details]

SAVE $145: As of March 28, the Dreame D10 Plus robot vacuum and mop combo is down to just $254.99 at

...[Details]

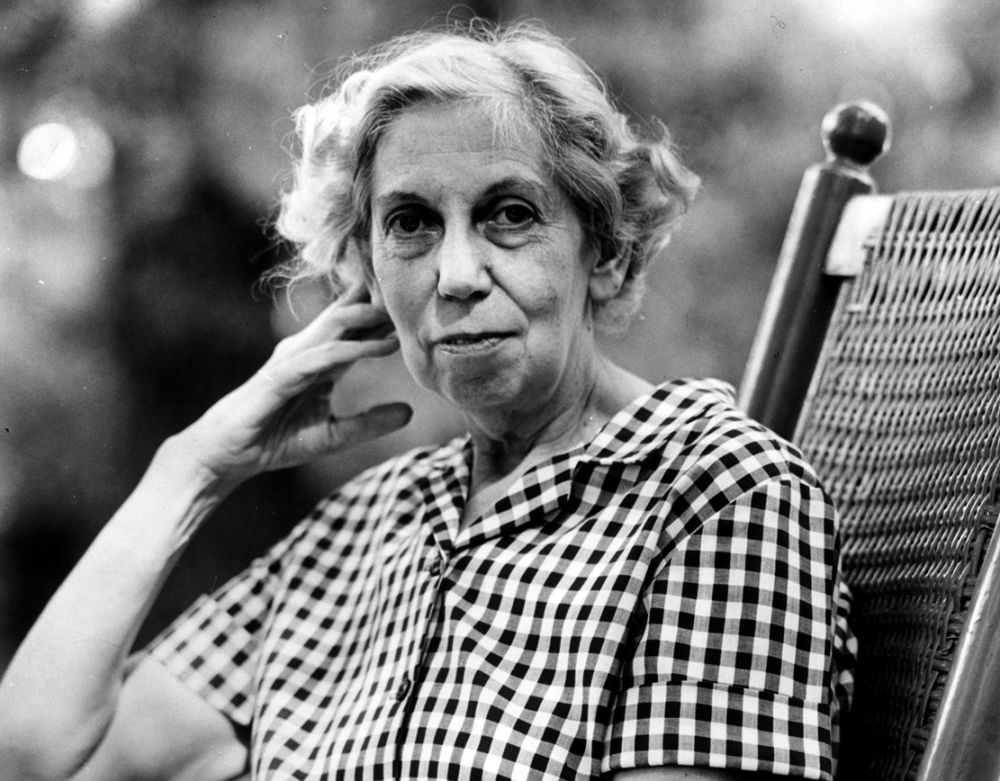

Redux: Eudora Welty, David Sedaris, Sharon Olds

Redux: Eudora Welty, David Sedaris, Sharon OldsBy The Paris ReviewJanuary 2, 2018ReduxEvery week, th

...[Details]

Redux: Eudora Welty, David Sedaris, Sharon OldsBy The Paris ReviewJanuary 2, 2018ReduxEvery week, th

...[Details]

"What Does Your Husband Think of Your Novel?"

“What Does Your HusbandThink of Your Novel?”By Jamie QuatroJanuary 16, 2018Arts & Cu

...[Details]

“What Does Your HusbandThink of Your Novel?”By Jamie QuatroJanuary 16, 2018Arts & Cu

...[Details]

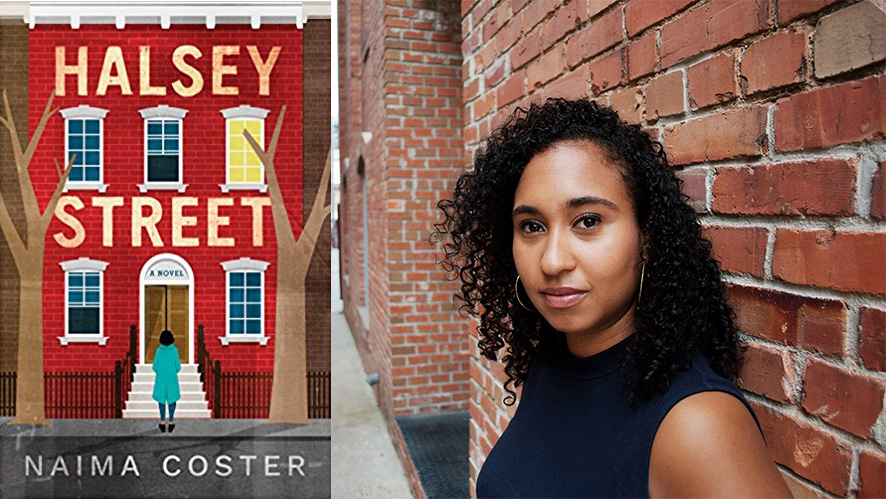

Owning Brooklyn: An Interview with Naima Coster

Owning Brooklyn: An Interview with Naima CosterBy Carina del Valle SchorskeJanuary 25, 2018At WorkNa

...[Details]

Owning Brooklyn: An Interview with Naima CosterBy Carina del Valle SchorskeJanuary 25, 2018At WorkNa

...[Details]

Ryzen 5 1600X vs. 1600: Which should you buy?

I review cheap gaming laptops for a living. This is the best one under $1,000.

I've got my crosshairs on the best cheap gaming laptop of 2023 after testing three this year —

...[Details]

I've got my crosshairs on the best cheap gaming laptop of 2023 after testing three this year —

...[Details]

接受PR>=1、BR>=1,流量相当,内容相关类链接。